Face recognition: How it works and how to stop it.

Facial recognition is a threat to privacy and freedom, but we can stop it!

Face recognition

Since the Clearview scandal, everyone knows about the dangers of face recognition. But facial recognition systems can do much more than identify individuals via surveillance cameras. These AI systems are used in many applications today, some for surveillance purposes, but others for securing smartphones or door locks. So let’s take a look at:

- What is face recognition?
- How does face recognition work?
- How accurate is face recognition?
- Is face recognition a surveillance tool?
- How to stop face recognition?

1. What is face recognition?

Face recognition is a technology that can identify an individual by their face. It works either by comparing a person’s face against a single picture of that person (often called verification) or by comparing it against a database of pictures of many people (identification).

Technically, a face recognition system pinpoints and compares facial features to identify a person based on an image. Because computerized facial recognition involves measuring a human’s physiological characteristics, facial recognition systems are categorized as biometrics, similar to iris or fingerprint recognition. Although facial recognition is less accurate than these two biometric technologies, it is widely adopted because it is easy to use, particularly via camera surveillance.

Facial recognition systems have become commonplace in a very short period of time, for instance to unlock smartphones, apps, or even doors. Law enforcement also uses facial recognition systems to track down people on a watchlist. In China, face recognition is already used for surveillance, and in the US it has been used to track people exercising their right to protected speech.

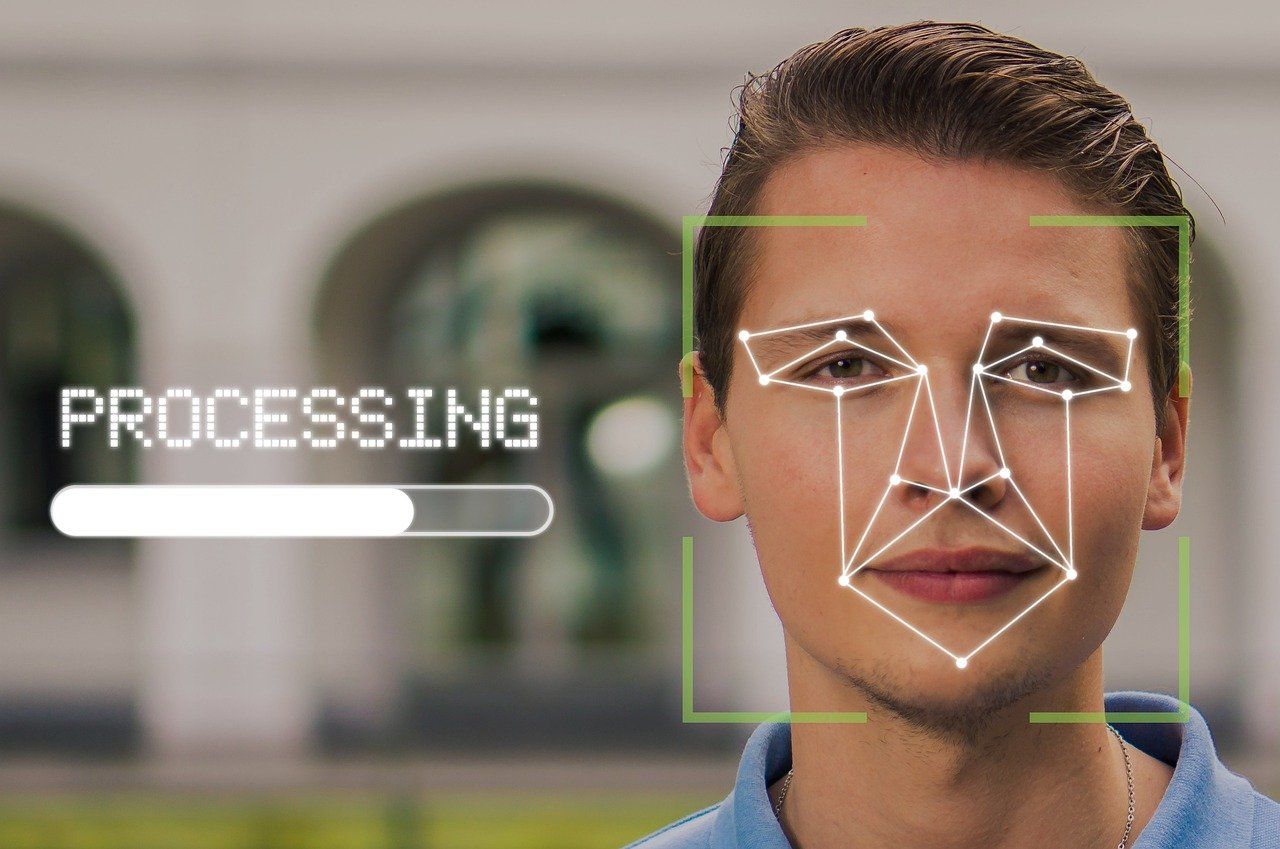

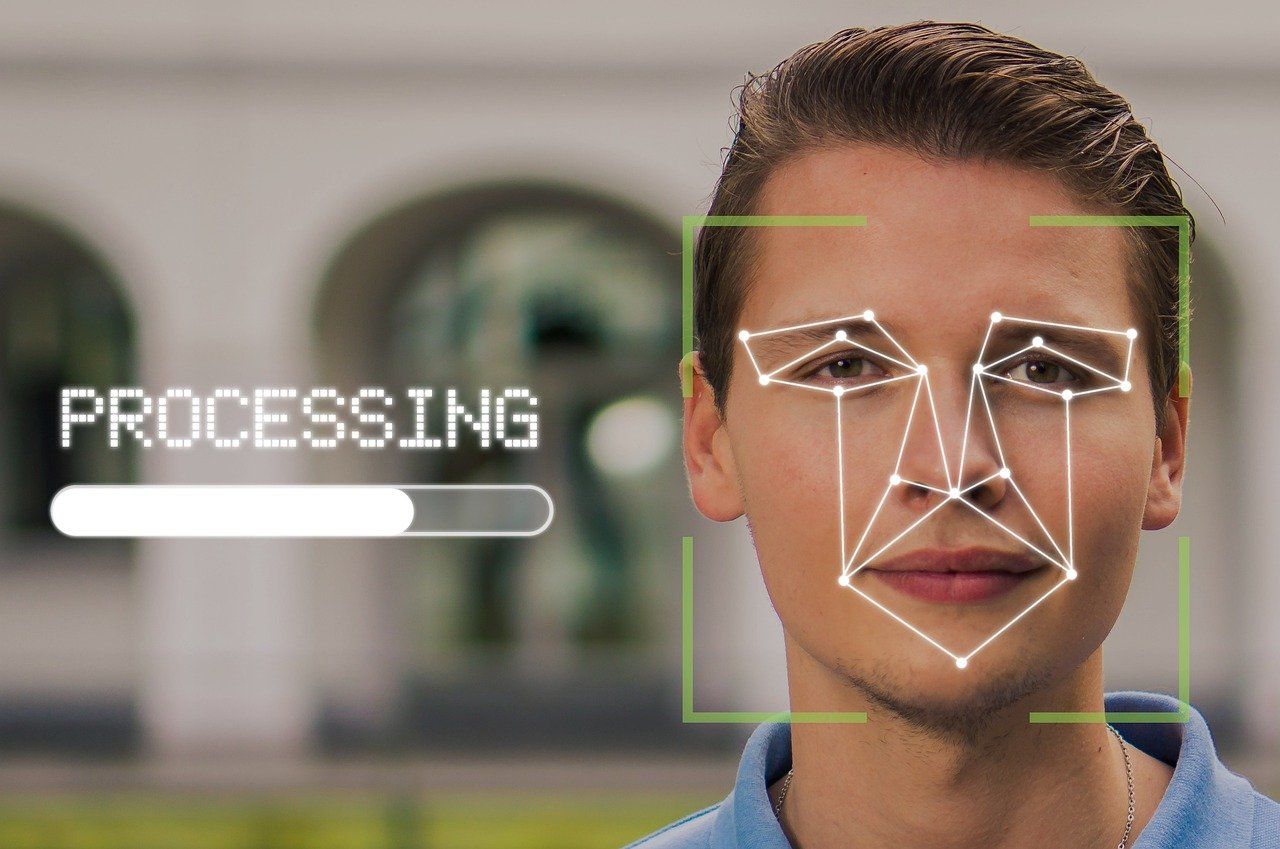

2. How does face recognition work?

In automated face recognition, a computer compares the features of a face against one or many face images stored in a database.

The system uses computer algorithms to pick out distinctive details about a person’s face, such as the distance between the eyes or the shape of the chin. These are then converted into a mathematical representation and compared to data on other faces collected in a face recognition database. The data about a particular face is called a face template and is distinct from a photograph because it’s designed to only include certain details that can be used to distinguish one face from another.

To find a match, the system

- detects the face in a picture,

- analyses the face detected,

- converts the image into a mathematical representation,

- and, finally, matches this representation to others in a database.
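The four steps above can be sketched in Python. This is a toy illustration, not how any particular product works: the `photo` dictionary, the three hand-picked measurements, and the function names `detect_face`, `analyse_face`, `to_template`, `match` and `identify` are all hypothetical. Modern systems typically learn the face template with a neural network rather than measuring a few distances by hand; the measurements here only mirror the article's examples (distance between the eyes, shape of the chin).

```python
import math

# Toy stand-ins (hypothetical): a "photo" is a dict, and the "face" inside it
# already carries measured landmark values. Real systems run a face detector
# and a neural network instead.

def detect_face(photo):
    """Step 1: find the face in the picture (None if there is no face)."""
    return photo.get("face")

def analyse_face(face):
    """Step 2: pick out distinctive measurements of the detected face."""
    return {key: face[key] for key in ("eye_distance", "nose_length", "chin_width")}

def to_template(measurements):
    """Step 3: convert the measurements into a fixed-order vector (the face template)."""
    return [measurements["eye_distance"], measurements["nose_length"], measurements["chin_width"]]

def match(template, database):
    """Step 4: return the enrolled name with the closest template, plus its distance."""
    return min(
        ((name, math.dist(template, stored)) for name, stored in database.items()),
        key=lambda pair: pair[1],
    )

def identify(photo, database):
    """Run the four steps in order; return None when no face is detected."""
    face = detect_face(photo)
    if face is None:
        return None
    return match(to_template(analyse_face(face)), database)

if __name__ == "__main__":
    database = {"alice": [6.2, 5.0, 11.8], "bob": [5.6, 5.4, 12.9]}
    photo = {"face": {"eye_distance": 6.1, "nose_length": 5.1, "chin_width": 11.9}}
    print(identify(photo, database)[0])  # alice
```

Note that `match` always returns the nearest name, even for someone who is not enrolled at all; a real deployment would also need a distance threshold before treating the nearest template as a genuine match.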

This way, a facial recognition system can identify you as the true owner of your iPhone or unlock your front door for you. For these use cases, facial recognition does not rely on a massive database of photos: it simply recognizes one person as the owner of the device and denies access to others.
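A minimal sketch of this one-to-one case, assuming face templates are short numeric vectors compared by Euclidean distance. The function `unlock`, the example templates, and the threshold `max_distance=0.5` are hypothetical. The difference from searching a database is that only one stored template is involved, and the answer is yes or no rather than a name.

```python
import math

def unlock(probe_template, owner_template, max_distance=0.5):
    """One-to-one verification: accept only if the new face is close enough to the owner's.

    `max_distance` is an illustrative threshold; a real device would tune it so
    that strangers are rarely accepted while the owner is rarely rejected.
    """
    return math.dist(probe_template, owner_template) <= max_distance

if __name__ == "__main__":
    owner = [6.2, 5.0, 11.8]                # template stored when the device was set up
    print(unlock([6.1, 5.1, 11.9], owner))  # True: distance about 0.17
    print(unlock([5.6, 5.4, 12.9], owner))  # False: distance about 1.32
```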

Alternatively, a facial recognition system can compare images taken by a surveillance camera against a database of images, including images uploaded to social media, to find people on a watchlist. This is the scenario the Clearview scandal exposed, and it shows the potential dangers of face recognition. The people on a watchlist do not have to be criminals: the authorities or companies managing the watchlists decide who should be on the list and who should be targeted.

Instead of identifying a person outright, some face recognition systems are designed to calculate a probability score that signifies how likely it is that an unknown person matches a specific face stored in the database. Such systems usually list several potential matches, ranked in order of likelihood.
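A ranked candidate list can be sketched as follows. How real vendors compute their scores is not described here, so this sketch swaps in a simple assumption: a softmax over negative template distances. The function `rank_candidates`, the `top_k` parameter, and the example templates are hypothetical.

```python
import math

def rank_candidates(probe, database, top_k=3):
    """Return up to `top_k` (name, score) pairs, most likely match first.

    Scores are a softmax over negative template distances, so they are
    relative: across the whole database they always sum to 1.
    """
    names = list(database)
    logits = [-math.dist(probe, database[name]) for name in names]
    largest = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(logit - largest) for logit in logits]
    total = sum(weights)
    ranked = sorted(
        zip(names, (w / total for w in weights)),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return ranked[:top_k]

if __name__ == "__main__":
    database = {"alice": [6.2, 5.0, 11.8], "bob": [5.6, 5.4, 12.9], "carol": [6.6, 4.7, 11.2]}
    for name, score in rank_candidates([6.1, 5.1, 11.9], database):
        print(f"{name}: {score:.2f}")
```

Because the scores in this sketch are relative, even a person who is not in the database at all still receives a top-ranked candidate with a score that can look convincing, which is why such a list should not be read as proof of identity.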

3. How accurate is face recognition?

Facial recognition supporters often argue that this AI technology is necessary to protect against the greatest risks, such as terrorist attacks and human trafficking. Despite such claims, facial recognition today is mostly used against petty crimes like shoplifting or selling $50 worth of drugs.

The use of face recognition, particularly for criminal prosecution, has been heavily criticized because the method is prone to errors.

Errors in face recognition can be ‘false negatives’ or ‘false positives’, as explained by the EFF:

“A ‘false negative’ is when the face recognition system fails to match a person’s face to an image that is, in fact, contained in a database. In other words, the system will erroneously return zero results in response to a query.”

“A ‘false positive’ is when the face recognition system does match a person’s face to an image in a database, but that match is actually incorrect. This is when a police officer submits an image of ‘Joe’, but the system erroneously tells the officer that the photo is of ‘Jack’.”

For instance, when the ACLU tested Amazon’s facial recognition software in 2018, the tool incorrectly identified 28 members of Congress as people who had been arrested for a crime.
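The EFF's two error types can be made concrete with a toy threshold search, assuming face templates are short numeric vectors compared by Euclidean distance. The functions `search` and `errors`, the templates for ‘Joe’ and ‘Jack’, and the threshold values are hypothetical; they only illustrate the trade-off between a strict and a loose matching threshold.

```python
import math

def search(probe, database, threshold):
    """Return every enrolled name whose template lies within `threshold` of the probe."""
    return [name for name, template in database.items()
            if math.dist(probe, template) <= threshold]

def errors(results, true_name, database):
    """Label one query with the EFF's two error types.

    false_negative: the person is enrolled, but the search did not return them.
    false_positives: returned names that belong to someone else.
    """
    false_negative = true_name in database and true_name not in results
    false_positives = [name for name in results if name != true_name]
    return false_negative, false_positives

if __name__ == "__main__":
    database = {"joe": [5.0, 5.0, 12.0], "jack": [5.0, 5.0, 12.6]}
    new_photo_of_joe = [5.0, 5.0, 12.2]  # about 0.2 from Joe, 0.4 from Jack
    for threshold in (0.1, 0.3, 0.5):
        results = search(new_photo_of_joe, database, threshold)
        print(threshold, results, errors(results, "joe", database))
```

With the strict threshold of 0.1 the search returns nothing even though Joe is enrolled (a false negative); at 0.3 it returns only Joe; at 0.5 it also returns Jack (a false positive). Tightening the threshold trades one kind of error for the other rather than eliminating both.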

Although these AI systems get better over time, the use of facial recognition remains problematic in itself.

4. Is face recognition a surveillance tool?

Face recognition is not only a surveillance tool; it enables total surveillance of anyone, anywhere.

While supporters of the technology argue that it all depends on how companies and authorities use it, the potential for abuse is limitless.

It does not take much imagination to picture a world where cameras are put up at every corner, tracking our every move, and matching our faces to a database in real-time to know who is where at all times. In such a world, the right to privacy is gone and mass surveillance in the public sphere is total.

China is one of the best, or should I say worst, examples when it comes to facial recognition and mass surveillance. A database leak by a Chinese facial recognition company shows the sheer extent of surveillance: within 24 hours alone, more than 6.8 million locations were logged to track people’s movements based on real-time facial recognition.

In China, the pictures of all 1.4 billion citizens have ended up in the facial recognition database. There are hundreds of millions of surveillance cameras in China, and the number continues to grow as the country pursues its dystopian surveillance dream.

5. How to stop face recognition

Facial recognition is one of the most dangerous surveillance technologies. Consequently, we must ban facial recognition to defend privacy.

Fortunately, today you have multiple options to defend yourself against face recognition.

You can make sure not to upload personal pictures to the web. If there are no pictures of you that can be scraped, there is no database against which surveillance cameras can match your face. The problem, however, as the Clearview scandal has shown, is that pictures of billions of people are already posted on social media sites, and companies can scrape these pictures along with name tags to create a database.

On top of that, some people will want to upload pictures to websites or social media because it is part of their social life. Researchers have now found a great way to do this and still fool the facial recognition algorithms.

Fawkes

Fawkes is a tool that makes facial recognition systems learn something wrong about you by slightly altering your pictures before you upload them to the web. This way, the AI can no longer match the uploaded pictures to your real face. However, when re-testing the Fawkes software after a couple of months, the researchers noticed that Microsoft Azure’s facial recognition service was no longer fooled by some of their images. Staying ahead of facial recognition software is a cat-and-mouse game and will remain so, as both technologies keep improving.

You can download Fawkes here.
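The core idea behind Fawkes, a small change to a picture that shifts what a recognition model “sees”, can be illustrated with a toy example. This is not Fawkes’s code or its exact algorithm, and it rests on heavy simplifying assumptions: the made-up 2×4 matrix `W` stands in for a feature extractor (the real tool works against deep neural networks), the per-pixel limit `budget` stands in for the perceptual image-similarity budget the real tool uses, and the names `features` and `cloak` are hypothetical. Projected gradient descent pushes the picture’s features toward those of someone else while keeping every pixel change small.

```python
import math

# Hypothetical linear "feature extractor": 4 pixel values -> 2 features.
# Real recognisers use deep neural networks; these weights are made up.
W = [[1.0, 0.5, 0.0, -0.5],
     [0.0, 1.0, 1.0, 0.5]]

def features(image):
    """Map an image (list of pixel values) to its feature vector."""
    return [sum(w * p for w, p in zip(row, image)) for row in W]

def cloak(image, target_features, budget=0.05, steps=200, lr=0.05):
    """Projected gradient descent: change each pixel by at most `budget` so the
    image's features move toward `target_features` (another identity)."""
    delta = [0.0] * len(image)
    for _ in range(steps):
        current = features([p + d for p, d in zip(image, delta)])
        residual = [f - t for f, t in zip(current, target_features)]
        # Gradient of ||features(image + delta) - target||^2 w.r.t. delta is 2 * W^T * residual.
        grad = [2 * sum(W[row][col] * residual[row] for row in range(len(W)))
                for col in range(len(image))]
        # Take a gradient step, then clip every pixel change back into [-budget, budget].
        delta = [max(-budget, min(budget, d - lr * g)) for d, g in zip(delta, grad)]
    return [p + d for p, d in zip(image, delta)]

if __name__ == "__main__":
    me = [0.6, 0.4, 0.5, 0.3]                       # toy picture of the user
    someone_else = features([0.3, 0.7, 0.2, 0.6])   # features of a different person
    cloaked = cloak(me, someone_else)
    print(math.dist(features(me), someone_else))       # before cloaking
    print(math.dist(features(cloaked), someone_else))  # smaller after cloaking
```

Because the perturbation in this toy is tuned against one particular `W`, a recogniser with different weights may not be fooled by it, which mirrors the cat-and-mouse dynamic described above.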

LowKey

Another promising research project is LowKey. This software turns images into unlearnable examples. When an AI performs a face recognition search, the facial recognition software entirely ignores the pictures or selfies that you have slightly altered with LowKey. The tool makes sure that the AI learns nothing about you, so there is no database against which it can match your face.

You can download LowKey here.

Take action

Fighting technology with technology is a good way to protect our privacy. For instance, we at Tutanota fight mass surveillance with end-to-end encryption, and successfully so.

However, as citizens of democratic countries, we must also make sure that our right to privacy is defended in politics.

You can join the political fight to reclaim your face and sign this Reclaim Your Face petition.