Meta will use AI-based visual analysis to identify underage users on Instagram and Facebook.

Meta will use AI-based technologies, such as photo scanning, to detect underage users by analyzing visual cues like bone structure. Although using AI-based scanning to determine a user's age raises privacy concerns, Meta says that "this is not facial recognition."

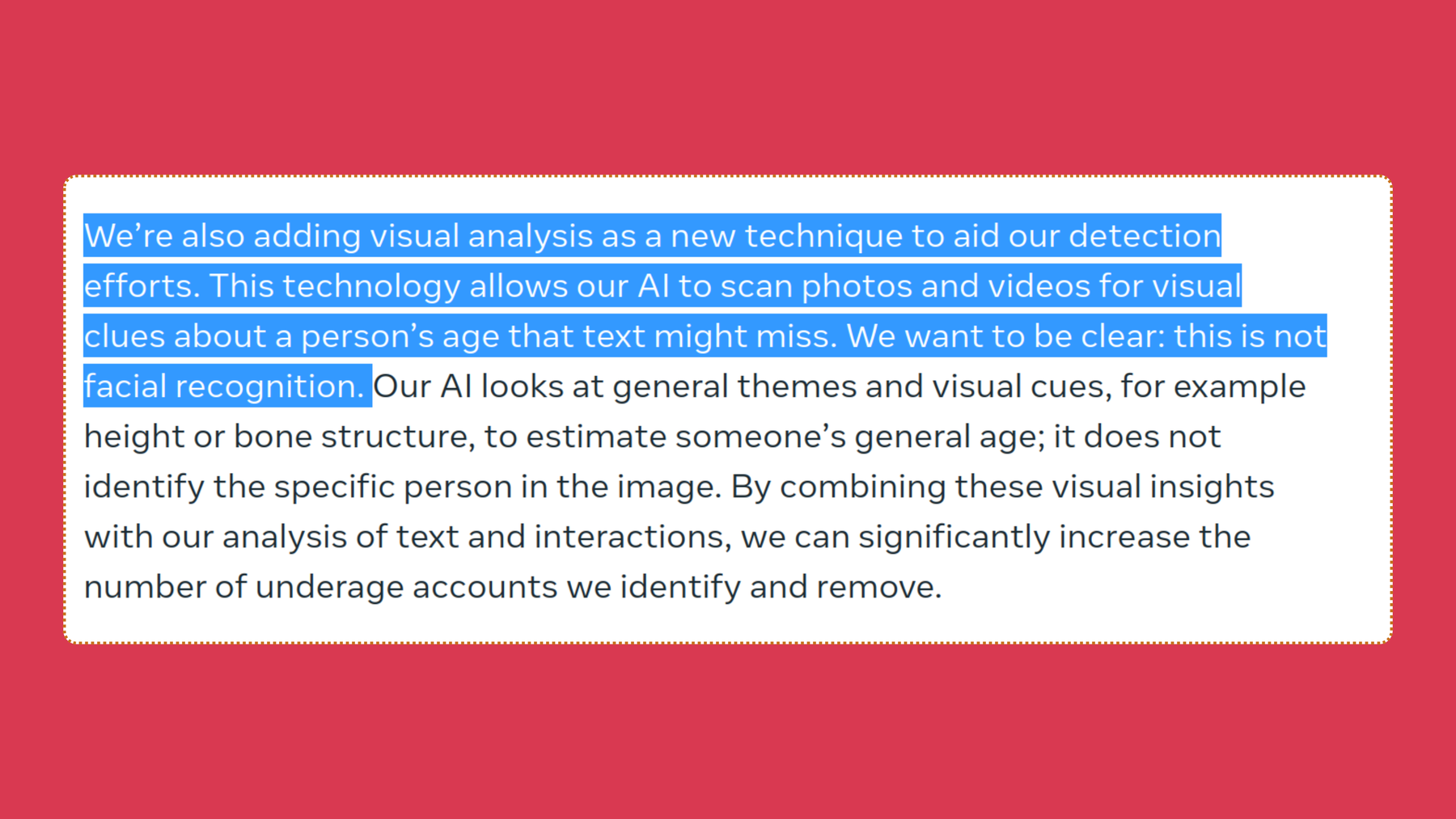

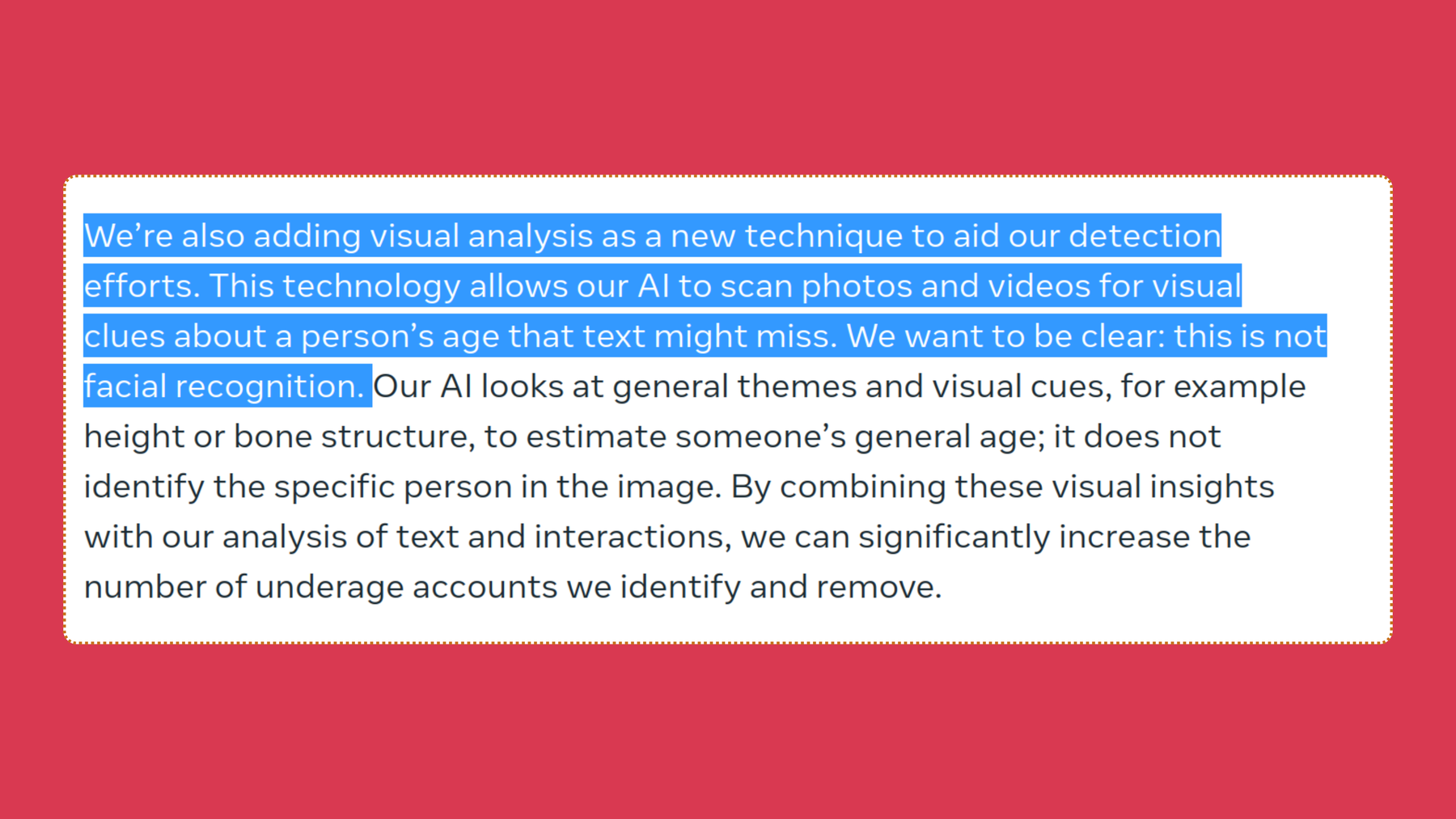

Meta will use advanced AI systems to detect accounts belonging to underage users. The AI will analyze users' entire profiles for contextual clues to their age, such as comments, captions, bios, and posts. Visual analysis will also examine photos and videos for "visual cues," such as bone structure and height, to estimate age.

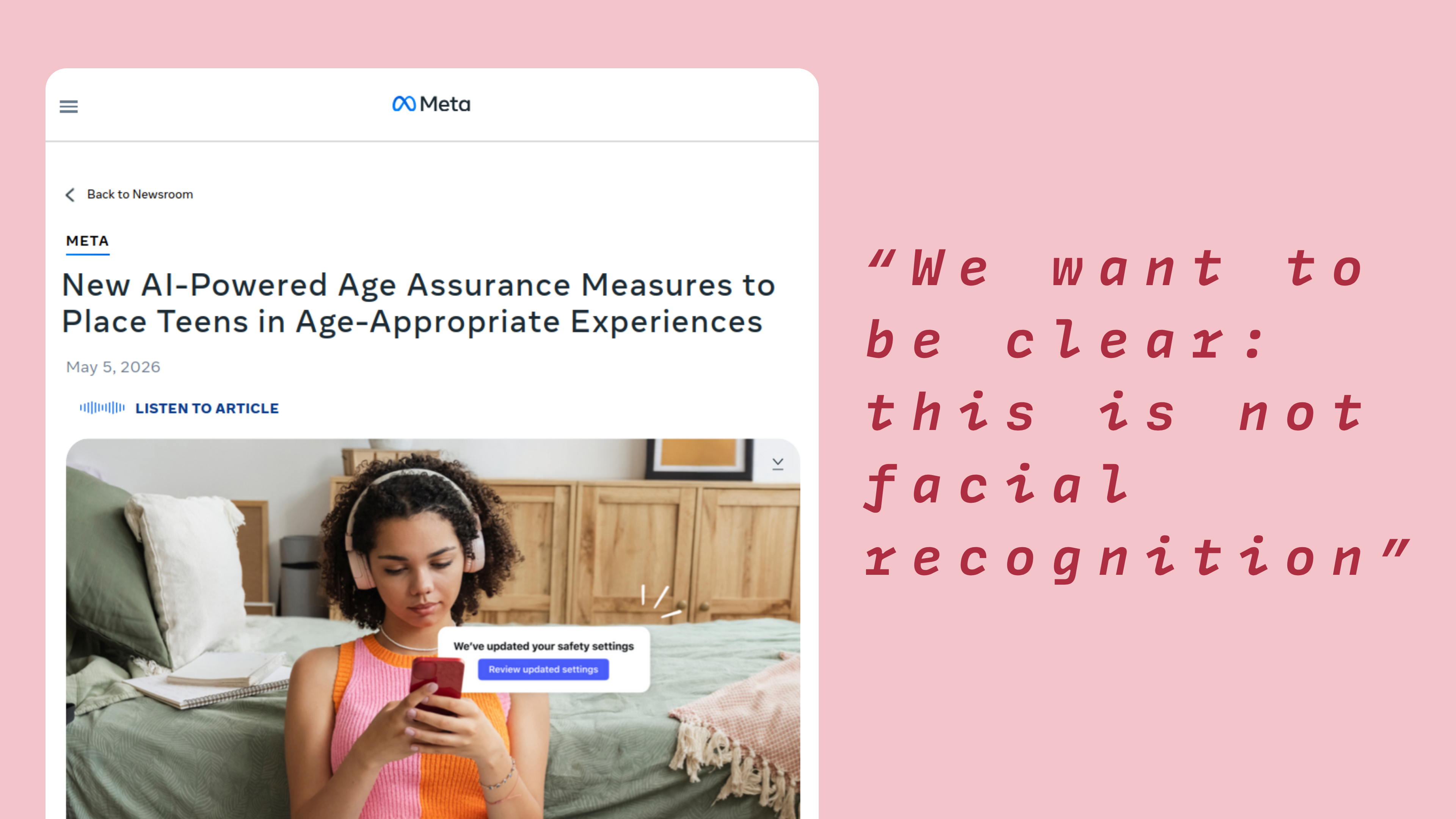

Although Meta will use AI visual analysis to scan images and videos, it wants to make clear that this is not facial recognition. Screenshot: Meta

Meta will use AI to analyze everything (only to detect underage users)

On May 5, Meta published a post sharing its plans to use AI to scan images and videos for visual cues, such as height or bone structure, to determine whether a user is under thirteen. The technology is intended to remove underage users from its platforms and to move teens to more appropriate account types, such as Meta's teen accounts. It is part of Meta's investment in its own age verification technology. The tech giant says that "this is not facial recognition":

"We want to be clear that this is not facial recognition. Our AI looks at general features and visual cues, such as height or bone structure, to estimate a person's approximate age; it does not identify the specific person in the image," Meta said in its blog post.

If Meta detects that an account may belong to an underage user, the account will be deactivated and the user will be asked to provide proof of age through Meta's age verification process; otherwise, the account will be deleted. Many of Meta's AI improvements are already in use worldwide, but some of its advanced AI systems, such as visual analysis, currently run only in specific countries while Meta works toward a broader rollout.
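The flow described above can be sketched as a small decision function. This is only an illustration of the policy as the article reports it, not Meta's implementation: the function name `next_action`, the `Action` values, and the idea of a single numeric age estimate are all assumptions made for the example. The thresholds (under 13 is underage, 13 to 17 is a teen) come from the article.

```python
from enum import Enum
from typing import Optional

MIN_AGE = 13    # minimum age cited in the article
ADULT_AGE = 18  # teen accounts cover ages 13 to 17


class Action(Enum):
    """Hypothetical account actions, named for this sketch only."""
    NO_CHANGE = "no change"
    TEEN_ACCOUNT = "move to teen account protections"
    DEACTIVATE_AND_REQUEST_PROOF = "deactivate and request proof of age"
    RESTORE = "restore account"
    DELETE = "delete account"


def next_action(estimated_age: float,
                proof_accepted: Optional[bool] = None) -> Action:
    """Map an age estimate to an account action (assumed policy).

    proof_accepted is None while no proof of age has been reviewed,
    True if proof was accepted, and False if it was missing or rejected.
    """
    if estimated_age < MIN_AGE:
        if proof_accepted is None:
            # First step: deactivate and ask for proof of age.
            return Action.DEACTIVATE_AND_REQUEST_PROOF
        # After review: restore on valid proof, otherwise delete.
        return Action.RESTORE if proof_accepted else Action.DELETE
    if estimated_age < ADULT_AGE:
        return Action.TEEN_ACCOUNT
    return Action.NO_CHANGE


if __name__ == "__main__":
    for age, proof in [(11.5, None), (11.5, False), (15, None), (30, None)]:
        print(age, proof, next_action(age, proof).value)
```

A real system would work with uncertain estimates rather than one number, so the boundaries here are far sharper than anything the article describes.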

Meta will expand automatic teen accounts

In 2024, Meta introduced teen accounts on Instagram, and the Silicon Valley tech giant has been gradually rolling them out on Facebook and Messenger for users aged 13 to 17 in different regions. These accounts, built to offer a more age-appropriate experience, aim to improve the privacy, safety, and well-being of Meta users under 18. In the blog post, Meta also announced plans to expand the technology that automatically places users it identifies as teens under teen account protections, on Instagram in the EU and Brazil and on Facebook in the US.

Can Meta be trusted?

The tech giant has said that this kind of AI visual analysis is not facial recognition, but the idea that a user's every click, comment, post, and interaction (not to mention images and videos) could be scanned and analyzed by Meta's AI systems may not sit well with many people. That is understandable, since Meta has repeatedly come under scrutiny for scandals, violations of user privacy, and unethical practices.

The list ranges from opting users in by default to the use of their Facebook and Instagram data for training its AI models, to being sued in 2023 by 30 US states for allegedly knowingly and deliberately building features into Facebook and Instagram that were addictive and harmful to young people's mental health, to its infamous Cambridge Analytica scandal, in which the data of millions of Facebook users was harvested and used for targeted political advertising.

To top it off, in March of this year Meta was ordered to pay $375 million for misleading users about the safety of its platforms and for failing to protect children online, in violation of New Mexico state law.

So, given Meta's track record, and especially now that all the big tech companies are racing to build the most advanced AI models with the help of huge amounts of user data, it is understandable that users are skeptical about Meta applying AI visual analysis to their data. Considering other recent AI data scandals, such as LinkedIn using your data to train its AI without asking for consent or Google pushing you to use its Gemini AI on Android, it would not be surprising if protecting children and teens were not the only goal of Meta's latest move.

The age of age verification

This year has seen an even stronger global push for age verification, and several countries are introducing social media bans for teenagers. Tech giants such as Meta and Google now have to comply with the rules or face the consequences. As a result, different kinds of age assurance and age verification systems are being introduced; for example, YouTube now requires users to verify their age, and Discord also plans to roll out an age verification system.

While it is understandable that big tech companies like Meta have to implement these systems to comply with the law, users of these platforms should be cautious and ask whether they can trust the companies to truly respect and protect the personal data, such as government-issued ID documents, that they hand over when verifying their identity.

In the age of age verification, we have to ask ourselves: do we really need age verification to protect teenagers from the negative consequences of social media, or should we change the algorithms that drive these platforms and are the main cause of their negative impact on people's mental health?